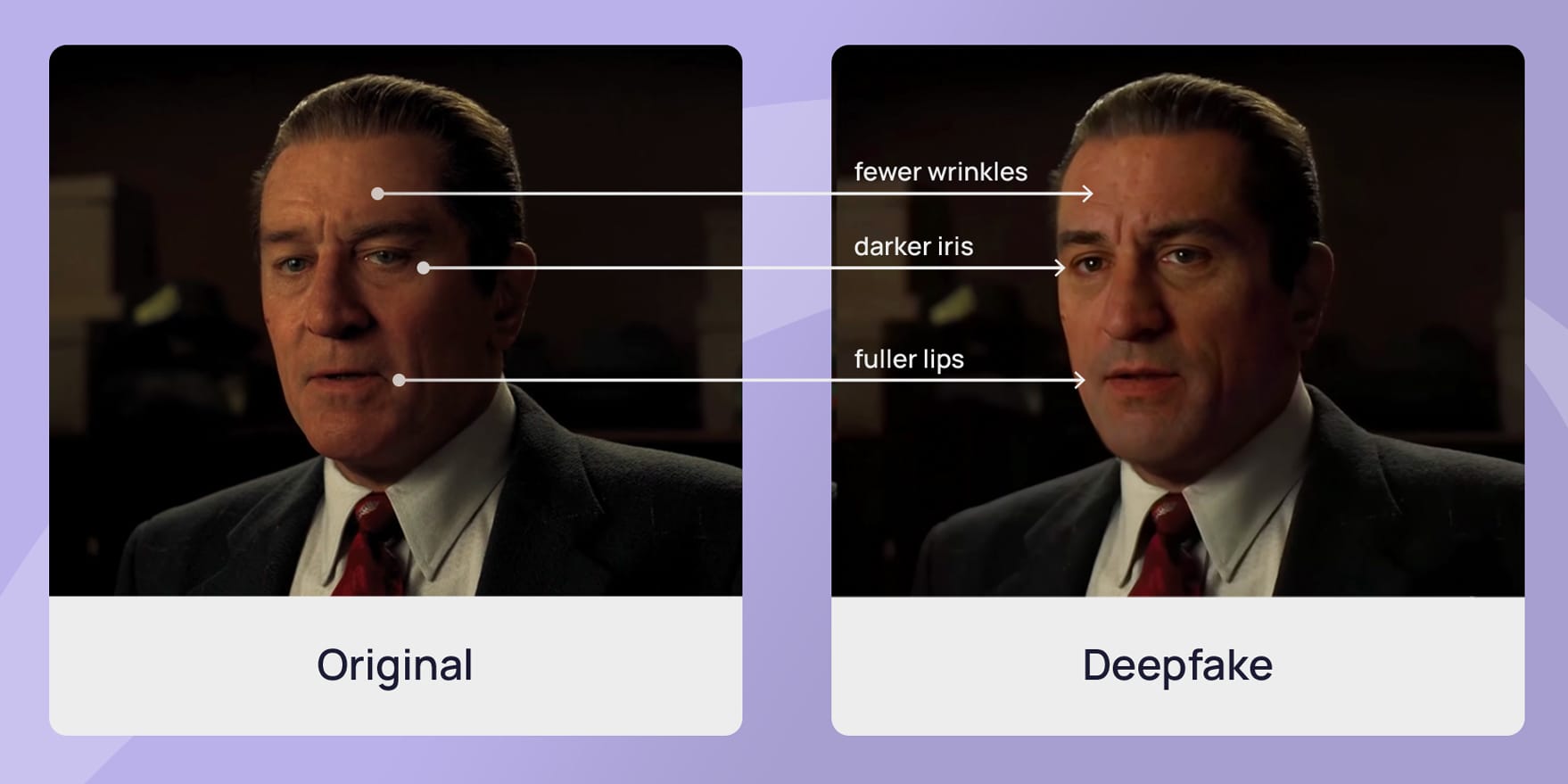

Deepfakes, Liability, and the Law: Who’s Responsible When AI Lies?

Seeing is believing.

That used to be a safe assumption.

Deepfakes have completely broken it.

With a few tools and the right data, anyone can generate hyper-realistic videos of people saying or doing things that never happened. Which leads to a legal headache that’s still unfolding:

When AI creates harm, who’s actually liable?

The Legal Problem: Too Many Possible Defendants

Deepfakes don’t come from a single source. There’s a chain:

- The developer who built the model

- The platform hosting the content

- The user who created and shared it

So when something goes wrong, whether defamation, fraud, or harassment, who takes the blame?

Right now, the law doesn’t give a clean answer.

Defamation Law Meets Synthetic Reality

In broad terms, a traditional defamation claim requires:

- A false statement

- About a real person

- Causing reputational harm

Deepfakes tick all three boxes easily.

But here’s the twist:

What if the creator is anonymous? Or the content goes viral before it can be traced?

Courts are being forced to stretch existing principles to cover digitally fabricated “speech.”

Platforms in the Hot Seat

Social media platforms are under increasing pressure to act.

In the US, Section 230 of the Communications Decency Act has traditionally shielded platforms from liability for user-generated content.

But deepfakes are testing that protection:

- Should platforms be responsible if they fail to remove harmful AI content?

- At what point does “hosting” become “facilitating”?

These are live questions with no settled answers.

The Rise of New Laws

Some jurisdictions aren’t waiting.

The EU AI Act, for example, introduces transparency obligations requiring certain AI-generated content to be clearly labelled.

It’s not a complete fix, but it signals a shift:

from reacting to harm to preventing deception.

Why This Matters for Future Lawyers

Deepfakes force the law into uncomfortable territory:

- Evidence can no longer be taken at face value

- Identity itself becomes easier to manipulate

- Legal responsibility becomes harder to pin down

And perhaps most importantly:

truth is no longer visually obvious.

The law has always dealt with lies.

What’s new is how convincing those lies have become.

For law students, this is more than a tech issue. It’s a fundamental challenge to how evidence, responsibility, and reality itself are understood in legal systems.
